The shift from x86 to ARM is about power, not just performance

Switching from an Intel iMac to Apple Silicon felt like a hardware upgrade. It turned out to be an architecture story, and that story is now reshaping the cloud.

The new iMac is about half the weight of the one it replaced. I noted that in an earlier post and left it there, a physical curiosity. What I did not fully grasp at the time was that the weight difference is not a design choice. It is a consequence of a decision made in 1985 by a British company called Acorn Computers.

A short YouTube video made it click.

Two languages

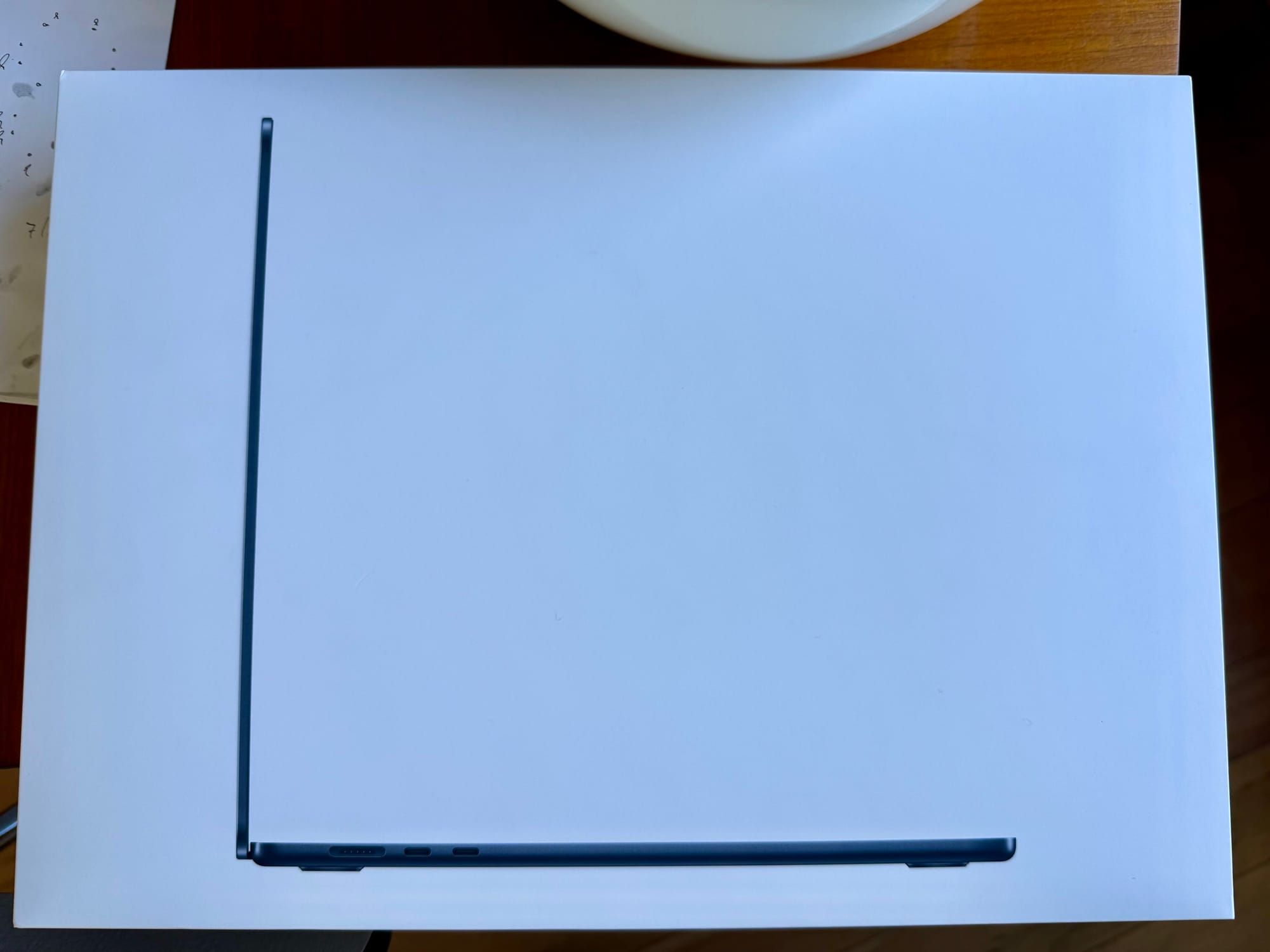

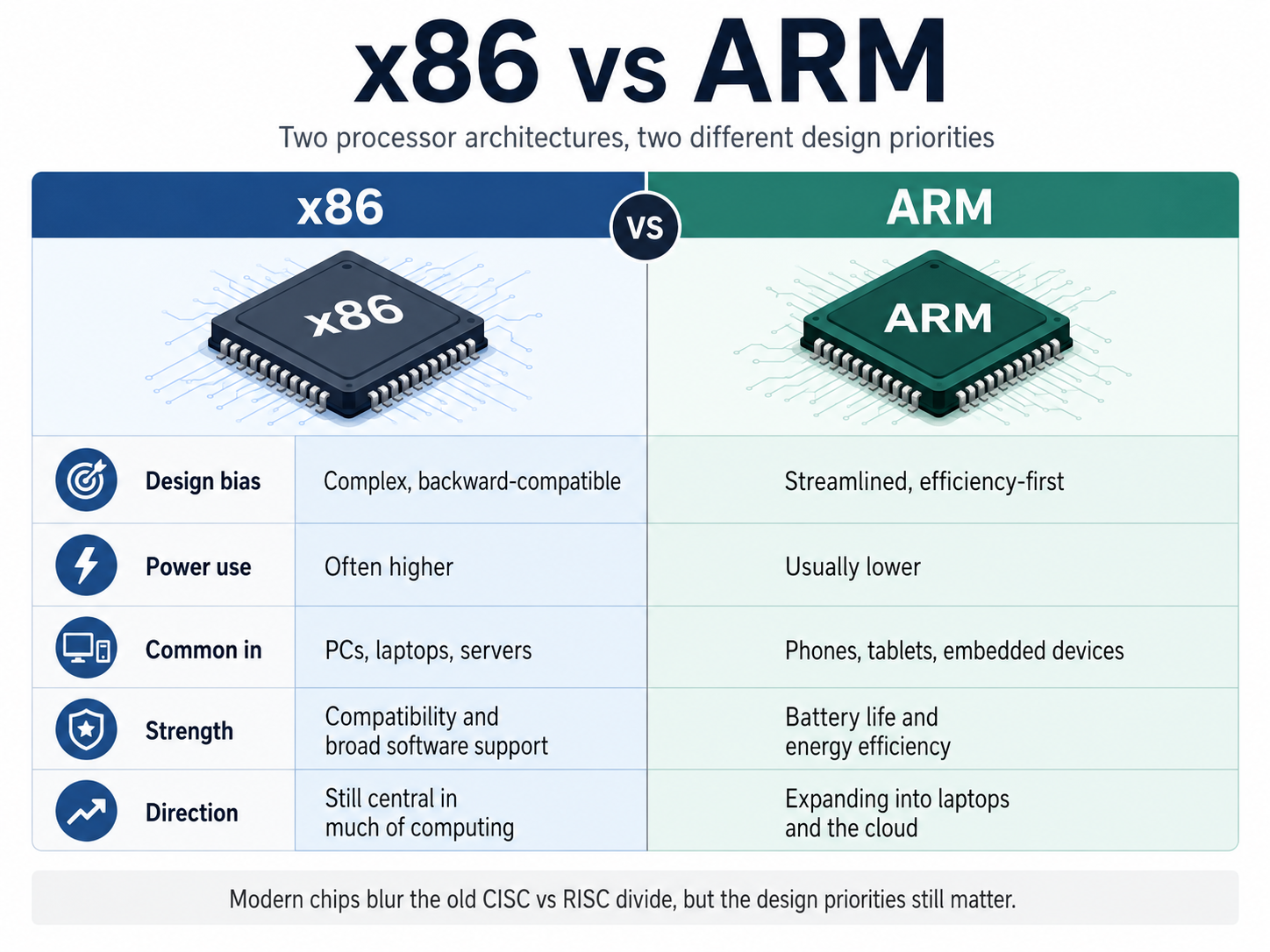

Every processor speaks a language. The architecture defines that language: what instructions the chip understands, how it processes them, how it talks to the rest of the machine. x86 and ARM are simply two different languages. Same job, very different dialects.

x86 goes back to 1978. Intel's 8086 chip started a family tree that runs unbroken to nearly every Windows PC, every AMD Ryzen, and most of the server racks behind Google and Netflix today. Forty-five years of backward compatibility, each generation carrying the weight of the previous one.

ARM came seven years later, from a different starting point. Acorn's engineers wanted a chip that was simpler, faster, and used less power. They called it the Acorn RISC Machine. The insight was almost contrarian: instead of building a chip that could handle complex instructions in a single step, build one around simple instructions executed quickly and cheaply. Most programs, it turned out, never needed the complexity anyway.

Where the weight goes

x86 chips are CISC: complex instruction set computing. A single instruction can do several things at once, such as reading from memory, doing arithmetic, and writing the result back. Instructions also vary in length, from one byte up to fifteen. That flexibility requires elaborate decoding hardware, transistors devoted not to computing but to figuring out what they have been asked to compute. At billions of operations per second, that overhead is not trivial.

ARM is RISC: reduced instruction set computing. Fixed-length instructions, a simpler decoder, more transistors left over for actual work. The result: ARM chips do less per instruction but do it faster, cooler, and on far less power. A typical x86 desktop chip draws between 65 and 250 watts. A phone-grade ARM chip draws 1 to 15.
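The decoder point is easier to see in a toy model. The sketch below is an illustration only: the variable-length format is made up (each instruction's first byte stores its own length) and is not real x86 encoding, and the function names are hypothetical. It shows that with fixed 4-byte instructions every start offset is known up front, while with variable lengths each instruction has to be examined before the next one can even be located.

```python
def fixed_length_starts(code: bytes, width: int = 4) -> list[int]:
    """Start offsets for fixed-width instructions: 0, width, 2*width, ...

    No byte of the stream has to be inspected, so a real decoder
    can work on several instructions in parallel.
    """
    return list(range(0, len(code), width))


def variable_length_starts(code: bytes) -> list[int]:
    """Start offsets for a toy variable-length encoding.

    Assumption (hypothetical format, not real x86): the first byte of
    each instruction holds that instruction's total length in bytes.
    The walk is inherently sequential: instruction N must be read
    before the start of instruction N+1 is known.
    """
    starts = []
    offset = 0
    while offset < len(code):
        starts.append(offset)
        length = code[offset]
        if length == 0:
            raise ValueError("zero-length instruction in toy stream")
        offset += length
    return starts


# Three fixed-width instructions of 4 bytes each.
fixed_stream = bytes(12)

# Four toy instructions of lengths 1, 3, 2 and 5 bytes.
variable_stream = bytes([1, 3, 0xAA, 0xBB, 2, 0xCC, 5, 1, 2, 3, 4])

print(fixed_length_starts(fixed_stream))
print(variable_length_starts(variable_stream))
```

Real x86 decoders use extra hardware to guess instruction boundaries ahead of time, which is exactly the kind of transistor budget the fixed-width design gets to spend elsewhere.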

That difference is why virtually every smartphone runs ARM. You cannot run a desktop x86 chip on a small battery. It would drain in well under an hour and burn your hand. And it is why the new iMac is half the weight of the old one. Apple Silicon is ARM. The lightness is not aesthetic. It is physics.
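The battery claim is simple division: capacity in watt-hours over draw in watts. A back-of-the-envelope sketch, assuming a 15 Wh phone battery (a round number picked for illustration, not a measured figure) and the wattage ranges quoted above:

```python
def runtime_hours(battery_wh: float, draw_watts: float) -> float:
    """Hours a battery lasts at a constant power draw."""
    if draw_watts <= 0:
        raise ValueError("draw_watts must be positive")
    return battery_wh / draw_watts


# Assumed capacity, for illustration only.
PHONE_BATTERY_WH = 15.0

# Draw figures are the ranges quoted in the text.
scenarios = [
    ("x86 desktop chip, low end", 65.0),
    ("x86 desktop chip, high end", 250.0),
    ("phone-grade ARM, high end", 15.0),
    ("phone-grade ARM, low end", 1.0),
]

for label, watts in scenarios:
    minutes = runtime_hours(PHONE_BATTERY_WH, watts) * 60
    print(f"{label}: {minutes:.0f} minutes")
```

Under those assumptions a desktop-class draw empties the battery in minutes rather than hours, before heat even enters the picture.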

The same logic, at scale

What surprised me, reading further, is that the efficiency argument does not stop at the desk.

Amazon Web Services has been deploying its own ARM-based Graviton processors in data centers, claiming up to 40% better price-performance compared to equivalent x86 options. At data center scale, lower power consumption translates directly into lower electricity costs. The logic that makes a laptop last eighteen hours on a charge also makes a server hall cheaper to run.

The cloud is not a single machine. It is tens of thousands of them. ARM's advantage compounds accordingly.
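To get a feel for how that compounds, here is a rough sketch of annual electricity cost for a fleet. Every input is an assumption chosen for round numbers: 10,000 servers, 400 W versus 300 W average draw per server, and $0.10 per kWh. None of these are AWS figures, and cooling overhead is ignored.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(servers: int, watts_per_server: float,
                       price_per_kwh: float) -> float:
    """Yearly electricity cost for a fleet running around the clock."""
    kwh = servers * watts_per_server * HOURS_PER_YEAR / 1000
    return kwh * price_per_kwh


# All values below are hypothetical, for illustration only.
SERVERS = 10_000
PRICE_PER_KWH = 0.10
HIGHER_DRAW_WATTS = 400.0  # assumed x86-style server
LOWER_DRAW_WATTS = 300.0   # assumed ARM-style server

higher = annual_energy_cost(SERVERS, HIGHER_DRAW_WATTS, PRICE_PER_KWH)
lower = annual_energy_cost(SERVERS, LOWER_DRAW_WATTS, PRICE_PER_KWH)

print(f"higher-draw fleet: ${higher:,.0f} per year")
print(f"lower-draw fleet:  ${lower:,.0f} per year")
print(f"difference:        ${higher - lower:,.0f} per year")
```

With these made-up inputs, a 100 W gap per server works out to roughly $876,000 a year. The absolute number means little, but it scales linearly with fleet size, which is the whole point.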

Convergence

The cleaner story would be: ARM wins, x86 fades. The actual story is more interesting.

Intel has added efficiency cores to its processors, smaller and simpler, borrowing the mix of big and small cores that ARM phone chips made standard. ARM chips like Apple's M-series are no longer just efficiency champions; they are closing the gap on raw performance. The two architectures are converging, each absorbing what the other does well.

x86 still leads in PC gaming, high-end creative workstations, and the entrenched server ecosystem. That will not shift quickly. Forty-five years of software compatibility is not something you dissolve in a product cycle.

But the direction is clear. As energy efficiency becomes a constraint, at the battery level and at the grid level, ARM's fundamental design advantage becomes more relevant, not less.

The lighter machine

In the first post about this iMac, I wrote that local computers are becoming lighter, replaceable instruments. The ARM architecture is part of why that is true in a literal sense. Less heat, less cooling hardware, a thinner chassis, half the weight.

I assumed there was still a clear distinction between phone chips and Mac chips. Apple Silicon in a Mac meant M-series: ARM as the foundation, but scaled up, redesigned, a different member of the family. The iPhone stayed in one category, the Mac in another.

The MacBook Neo ended that story. It is the first Mac to use an A-series chip, the same family found in the iPhone, rather than the M-series chips in other Macs. Reports suggest the first production run used binned A18 Pro chips, originally intended for the iPhone 16 Pro. Apple is shipping a laptop powered by recycled phone silicon. And it sells for $599.

The A18 Pro is not a compromise chip. It runs at M3-to-M4 class performance for single-threaded work.

The boundary between phone and computer, already blurry at the architecture level, has now dissolved at the product level too. What started as a design principle in 1985, do more with less, has worked its way from pocket to desk to data center. The lighter machine was never just about weight.