The EU Age Verification App Was Designed to Be Distrusted

The EU Age Verification App is technically sound and communicated all wrong. It buried the upside, led with fear, and launched a framework as a product.

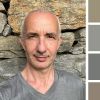

The announcement slide said "NO TRACEABILITY" in large capitals on a blue background ringed with gold stars. The Threads comment section replied: "How do we verify children, ah yes let's make big database of all people."

The architecture is the opposite of a database. The communication guaranteed nobody would notice.

What they built

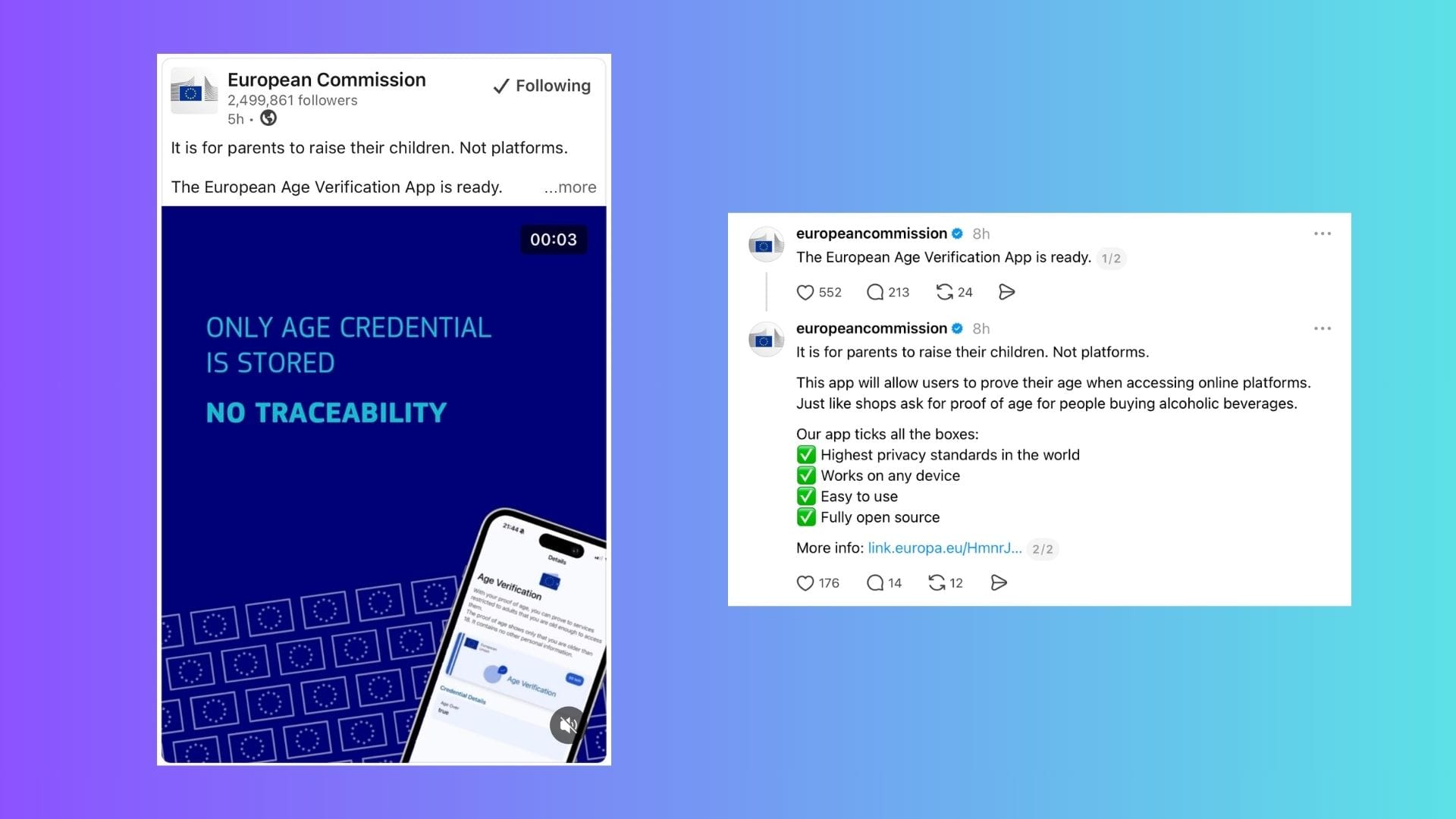

The Age Verification App is a "mini wallet," built on the same technical foundation as the forthcoming EU Digital Identity Wallet. When a platform requests age verification, the app generates a cryptographic proof derived from a credential issued by a national identity authority. The platform receives one binary answer: over 18, or not. No name, no date of birth, no address. The underlying identity data never leaves your device.
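The "one binary answer" idea can be sketched in a few lines. This is a minimal illustration, not the blueprint's code. It assumes a salted-digest design similar to formats such as ISO/IEC 18013-5 mdoc and SD-JWT: the issuer signs salted hashes of every attribute, and the holder reveals only `age_over_18` and its salt. HMAC with a shared key stands in for the issuer's public-key signature only so the sketch stays in the standard library. `issue`, `present` and `verify` are hypothetical names.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical stand-in for the issuer's signing key. A real issuer signs with
# an asymmetric key and verifiers check the public key; HMAC is used here only
# to keep the sketch within the standard library.
ISSUER_KEY = secrets.token_bytes(32)


def digest(salt: str, name: str, value) -> str:
    """Salted hash of one attribute, so undisclosed values cannot be guessed."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def issue(attributes: dict) -> dict:
    """Issuer: salt each attribute, then sign only the list of digests."""
    salts = {name: secrets.token_hex(16) for name in attributes}
    # Sorted so list position does not reveal which digest is which attribute.
    digests = sorted(digest(salts[n], n, v) for n, v in attributes.items())
    signature = hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    return {"attributes": attributes, "salts": salts, "digests": digests, "signature": signature}


def present(credential: dict, name: str) -> dict:
    """Holder: reveal one attribute and its salt; everything else stays hashed."""
    return {
        "disclosed": [credential["salts"][name], name, credential["attributes"][name]],
        "digests": credential["digests"],
        "signature": credential["signature"],
    }


def verify(presentation: dict) -> bool:
    """Verifier: check the signature, then check the disclosed claim is covered by it."""
    expected = hmac.new(
        ISSUER_KEY, json.dumps(presentation["digests"]).encode(), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected, presentation["signature"]):
        return False
    salt, name, value = presentation["disclosed"]
    return digest(salt, name, value) in presentation["digests"]
```

Called as `verify(present(issue({"given_name": "Alex", "birth_date": "1990-04-01", "age_over_18": True}), "age_over_18"))`, this returns `True`, and the presentation carries the name and birth date only as salted digests. Reusing one credential across sites would let those sites correlate the same digests; the sketch ignores that.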

The same mechanism works offline: at a festival gate, a bar, a tobacconist. You present your wallet, the venue's device gets a yes or no, and sees nothing else. Many adults have handed a passport to a bouncer to prove they are old enough to enter, giving a stranger full sight of their identity because the system had no finer instrument. This changes that.

The credential is cryptographically bound to the device it was issued to. It cannot be copied to another phone or handed to someone else; the proof would fail. The weak point is onboarding: if someone sets up a wallet using another person's ID document, the credential reflects that person's age. That is a process problem, not a cryptographic one.
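Device binding is usually a challenge-response. The minimal sketch below assumes the credential carries a device public key whose private half never leaves the phone. The verifier sends a fresh nonce, and only the holder of that private key can sign it. A Schnorr signature over a deliberately tiny toy group stands in for whatever scheme a real wallet uses; a production wallet would use a standard elliptic-curve signature with the key held in secure hardware. `new_device_key`, `sign_nonce` and `verify_binding` are hypothetical names.

```python
import hashlib
import secrets

# Toy Schnorr group (assumption, insecure): P = 2Q + 1 with Q prime, and G = 4
# generates the subgroup of order Q. The numbers only make the arithmetic visible.
P, Q, G = 2039, 1019, 4


def challenge(commitment: int, nonce: bytes) -> int:
    """Hash the commitment and the verifier's nonce into 1..Q-1.

    The challenge is kept nonzero so every valid signature depends on the key.
    """
    h = hashlib.sha256(commitment.to_bytes(2, "big") + nonce).digest()
    return 1 + int.from_bytes(h, "big") % (Q - 1)


def new_device_key() -> tuple[int, int]:
    """Private key stays on the device; the public key goes into the credential."""
    private = secrets.randbelow(Q - 1) + 1
    return private, pow(G, private, P)


def sign_nonce(private: int, nonce: bytes) -> tuple[int, int]:
    """Device answers the verifier's fresh nonce with a Schnorr signature."""
    k = secrets.randbelow(Q - 1) + 1
    commitment = pow(G, k, P)
    e = challenge(commitment, nonce)
    return commitment, (k + e * private) % Q


def verify_binding(credential: dict, nonce: bytes, signature: tuple[int, int]) -> bool:
    """Accept only if the signer holds the key the credential was bound to."""
    commitment, s = signature
    e = challenge(commitment, nonce)
    public = credential["device_public_key"]
    return pow(G, s, P) == (commitment * pow(public, e, P)) % P
```

A copied credential paired with any other private key fails `verify_binding`, which matches the paragraph's point. Note what the check cannot see: whose ID document was used when the key was first bound. That gap is the onboarding problem, and no signature closes it.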

Two failures, one root cause

The Commission made two distinct mistakes, and they compound each other.

The first is aesthetic. Blue backgrounds, gold stars, caps-lock claims: this is the design language of an institutional poster, and it activates exactly the distrust it was meant to address. Design communicates before words do. A system built on cryptographic privacy, dressed in the visual language of state surveillance, loses the argument before it starts. By contrast, Estonia's e-Residency programme, an adjacent piece of identity infrastructure, answered three questions before anything else: what is it, who is it for, and how do I get one.

The second is structural. "The European Age Verification App is ready" is product language. It implies: find it, download it, use it. What actually launched is an open-source blueprint that member states can adapt. It is currently in pilot in six countries and depends on national identity infrastructure that varies by country. There is no App Store link, no rollout date, and no answer to the question any citizen would ask: what do I actually do with this, and when?

Both failures share a root. The Commission framed this entirely as a child protection measure: "It is for parents to raise their children. Not platforms." Every word is defensive. The framing excludes every adult who would benefit from not uploading a passport scan to access a service, or from not handing their full identity to a bouncer at a festival. The genuine upside went unspoken: comfort, convenience, and less personal data exposed in daily life.

The real circumvention problem

The Threads commenter who noted "VPNs are also ready" was pointing at something real, though not quite in the way they meant. The cryptographic proof cannot be spoofed or transferred. But a VPN that makes a user appear to browse from outside EU jurisdiction sidesteps the obligation to verify at all. What gets circumvented is not the architecture but the enforcement.

This is a Digital Services Act (DSA) problem, not a technical one. Whether platforms are compelled to integrate the system, and whether that compulsion reaches beyond EU borders, are open questions the announcement did not address. The blueprint closes one circumvention route. Jurisdiction closes another only if enforcement follows.

The unlaunched product

The technical work is done, and it is genuinely good. What has not started is the communication work: framing this around the adult user who wants to prove their age without surrendering their identity. Serving that person also satisfies the DSA, protects children, and reduces platform liability. The upside was always there. The Commission chose not to use it.

A system designed to preserve privacy should not have to overcome the impression that it destroys it. That gap is not a technical problem. It is a choice.

Discussing this on LinkedIn if you want to weigh in.