A Personal Retention Strategy for ChatGPT

From digital scarcity to data abundance, and why I now choose deliberate reset over permanent retention in ChatGPT.

I work daily with large language models, and with ChatGPT in particular. It has become part of how I think, draft, compare perspectives, and test ideas.

But there is a side effect: it accumulates. Conversations remain visible. Threads multiply. The system keeps a trail of exploratory thinking that was never meant to be permanent.

Recently, I decided to approach that accumulation more deliberately. Not as a technical problem, but as a matter of habit.

Quick takeaways

- Data accumulation has psychological effects, even when storage is cheap.

- ChatGPT offers little middle ground between “keep everything” and “delete everything”.

- Periodic, deliberate clearing works better for me than continuous trimming.

- Cognitive hygiene is part of responsible AI use.

- Personal practice shapes how we later think about organisational data governance.

How ChatGPT handles conversations

In practical terms, the options are relatively simple.

Conversations are stored by default. You can archive them, but that mainly changes their visibility. They still exist in the account environment.

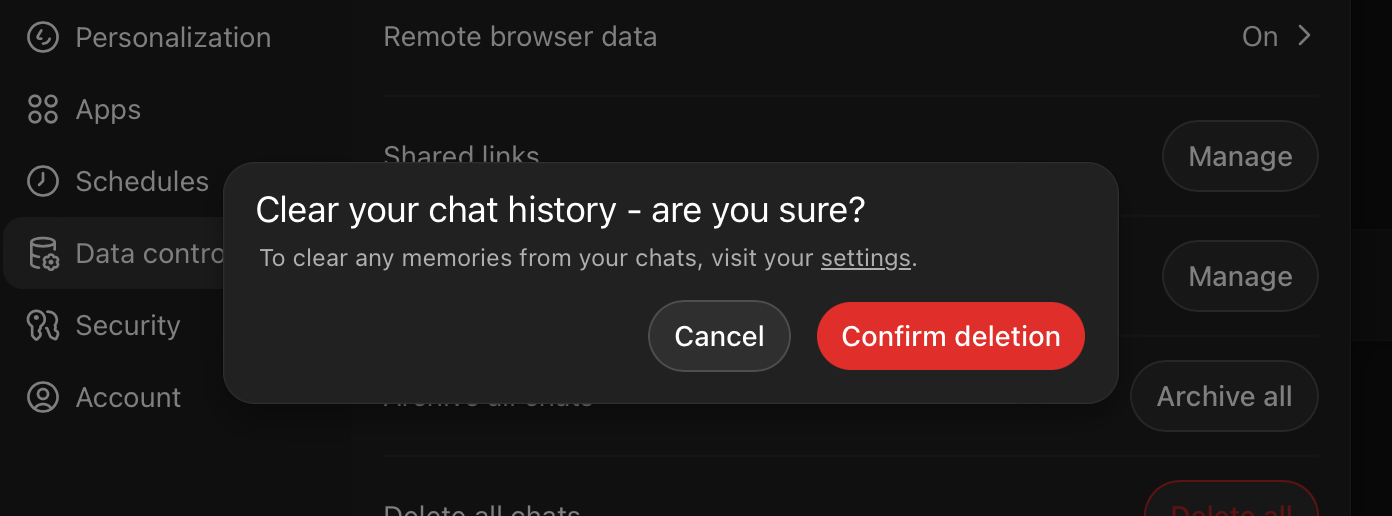

There is also a more radical option: deleting chats, or deleting all chats. That removes them from the interface and reduces what remains available for continuity across threads.

In the Plus environment, there is some cross-thread awareness and temporary memory. If you clear everything, you also lose that contextual continuity. In the Business environment, memory behaves differently, but the structural choice is similar: retain broadly, or remove decisively.

There is no refined “delete everything older than three months” button. No nuanced retention schedule. It is either accumulation by default, or active intervention.

That simplicity forces a decision.

The psychology

My instinct to save everything did not come out of nowhere. It was shaped in an earlier phase of the internet.

There was a time when storage was limited. Hard drives were small. Backups were manual. An export could genuinely save your work, your correspondence, your history. Losing data meant real loss. So you learned to preserve. To copy. To archive. To keep local control.

That reflex made sense then. It was protective.

The environment has changed completely. Storage is abundant. Cloud systems retain by default. Platforms are designed to accumulate. Conversations, drafts, metadata, versions: everything remains somewhere.

Paradoxically, scarcity trained us to save carefully. Abundance now tempts us to save indiscriminately.

I noticed that I was still operating with the old reflex in a new context. Keeping everything no longer protected me from loss. It simply increased volume.

Letting go, in an era of automatic retention, feels counterintuitive. Deleting something that is effortlessly stored can feel almost irresponsible.

And yet, for me, it has become the more disciplined act.

Not because data is dangerous in itself, but because unlimited retention creates a diffuse environment. The value of a conversation often lies in what it enabled, not in its permanent storage.

Once that became clear, the old reflex began to loosen.

Hygiene and rhythm

I experimented with gradual pruning, but it kept the topic alive in my mind. There was always something left to clean up.

What works better for me is a tidal approach. A period of active use. Then a deliberate session of consolidation and clearing.

Before deleting, I sometimes export or summarise what still matters. Often, I realise that very little truly needs to be retained. The core ideas have already moved into notes, documents, or articles.
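The “older than three months” rule that the interface lacks can at least be approximated on an export, as a way of deciding what to review before clearing. What follows is a minimal sketch in Python, not a description of any official export format: it assumes the conversations have already been loaded into a list of records, and the field names `title` and `update_time` (a Unix timestamp in seconds) are hypothetical stand-ins. It only sorts records into two piles. The deleting itself still happens by hand in the interface.

```python
from datetime import datetime, timedelta, timezone


def split_by_age(conversations, now, max_age_days=90):
    """Partition records into (recent, stale) by their last update time.

    Assumption: each record is a dict with a hypothetical "update_time"
    field holding a Unix timestamp in seconds.
    """
    cutoff = now - timedelta(days=max_age_days)
    recent, stale = [], []
    for conv in conversations:
        updated = datetime.fromtimestamp(conv["update_time"], tz=timezone.utc)
        # Anything last touched before the cutoff is a candidate for clearing.
        (stale if updated < cutoff else recent).append(conv)
    return recent, stale


# Illustrative data only; titles and dates are made up.
now = datetime(2025, 6, 1, tzinfo=timezone.utc)
sample = [
    {"title": "Draft outline",
     "update_time": datetime(2025, 5, 20, tzinfo=timezone.utc).timestamp()},
    {"title": "Old brainstorm",
     "update_time": datetime(2025, 1, 10, tzinfo=timezone.utc).timestamp()},
]

recent, stale = split_by_age(sample, now)
for conv in stale:
    print(conv["title"])  # prints "Old brainstorm"
```

The 90-day default is an arbitrary choice that mirrors the three-month example. The point of the sketch is the review list, which makes it easier to see how little actually needs to be kept.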

Pressing delete is slightly uncomfortable. There is always a moment of doubt. But the effect afterwards is consistent: the interface feels lighter. The tool feels more like a workspace and less like an archive.

The business implication

This is not only personal preference.

In professional contexts, we discuss AI governance, retention policies, compliance, and risk. Those discussions are necessary. But they remain abstract if they are not grounded in practice.

If we treat conversational AI as a permanent cognitive archive, we implicitly accept indefinite retention of exploratory thinking. If we treat it as a workspace, we introduce rhythm, boundaries, and completion.

For me, the choice to clear out regularly aligns with how I now treat data more broadly. Keep what has structural value. Remove what has served its purpose.

That stance affects how I think about organisational data as well. Stewardship begins with habit.

Closing

I no longer aim to preserve every intermediate step of thinking.

ChatGPT is a powerful amplifier. But amplification does not require permanence.

Periodic reset is not a rejection of the tool. It is a way of keeping it usable, proportionate, and aligned with how I want to work.